How DJs Extract Clean Acapellas Without the Muddy Artifacts

The acapella is one of the most powerful tools in a DJ’s set. A vocal from one track played over the instrumental of another, acapella elements layered to build tension before a drop, a hook isolated and extended to hold the dancefloor through a transition: all of these techniques require a clean, isolated vocal.

The official acapella sites have limited catalogs. Most tracks that DJs want to mix never had official acapellas released. And DIY extraction from full mixes has historically produced results that DJs describe the same way: muddy, phasey, with enough instrumental bleed that using it in a professional set exposes the seams.

What actually produces a clean acapella from a full mix, and what quality level is realistically achievable with current tools?

Why Has DIY Acapella Extraction Historically Sounded Bad?

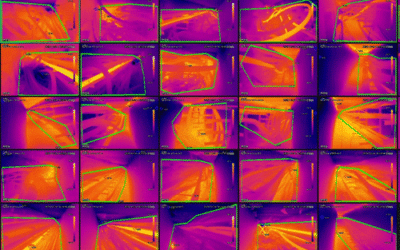

Traditional phase cancellation subtracts one channel of a stereo mix from the other, which cancels whatever is identical in both channels, typically the center-panned content that includes the vocal. It works reasonably well only when the vocal sits dead center and everything else is panned away from it, and it does nothing useful on a mono recording, where both channels are identical and the subtraction leaves silence. On modern mastered tracks, where width processing is applied to the entire mix including the vocal, it produces a degraded, hollow-sounding result: some vocal content cancels, the rest survives, and the cancellation itself leaves audible artifacts.

The fundamental limitation is that phase cancellation is pure arithmetic on the two channels. It can cancel signal content that’s phase-coherent across both channels. It can’t separate a vocal from instruments that genuinely occupy the same frequency range, because nothing in the subtraction distinguishes one source from another.
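To make the limitation concrete, here is a minimal sketch of channel subtraction on synthetic signals. The sine tones, the sample rate, and the way the "widened" vocal is modeled (a level change plus a one-sample delay in one channel) are all illustrative assumptions, not a model of any real master.

```python
import math

SAMPLE_RATE = 8000  # Hz, kept low so the example stays small

def sine(freq_hz, n_samples, amp=1.0):
    """Return n_samples of a sine wave as a list of floats."""
    return [amp * math.sin(2 * math.pi * freq_hz * n / SAMPLE_RATE)
            for n in range(n_samples)]

def rms(signal):
    """Root-mean-square level of a signal."""
    return math.sqrt(sum(x * x for x in signal) / len(signal))

n = 800
vocal = sine(440, n)         # stand-in for a center-panned vocal
guitar = sine(220, n, 0.5)   # stand-in for an instrument panned hard left
keys = sine(330, n, 0.5)     # stand-in for an instrument panned hard right

# What the subtraction would leave if the vocal cancelled perfectly.
instruments_only = [g - k for g, k in zip(guitar, keys)]

# Case 1: the vocal is identical in both channels (dead center).
left = [v + g for v, g in zip(vocal, guitar)]
right = [v + k for v, k in zip(vocal, keys)]
side = [l - r for l, r in zip(left, right)]
residue_centered = rms([s - e for s, e in zip(side, instruments_only)])

# Case 2: the vocal differs between channels (assumed widening model:
# 0.8x level and a one-sample delay on the right).
vocal_right = [0.8 * vocal[i - 1] if i > 0 else 0.0 for i in range(n)]
right_wide = [v + k for v, k in zip(vocal_right, keys)]
side_wide = [l - r for l, r in zip(left, right_wide)]
residue_widened = rms([s - e for s, e in zip(side_wide, instruments_only)])

print(f"vocal residue, centered vocal: {residue_centered:.6f}")
print(f"vocal residue, widened vocal:  {residue_widened:.6f}")
```

In the centered case the vocal vanishes from the difference signal; in the widened case a noticeable part of it survives. Note that in both cases the subtraction removes the vocal rather than isolating it, so producing an acapella this way needs a further step on top of an already imperfect cancellation.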

AI separation takes a different approach. Rather than using phase mathematics, it uses pattern recognition — learning what vocal content looks like spectrally and behaviorally, and separating it based on those learned patterns.
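One common way this idea is put into practice is spectral masking: the mix is converted to the frequency domain and each frequency bin is scaled by how much of it is estimated to belong to the vocal. The sketch below is a toy illustration only. It uses a single 64-sample frame, a naive DFT to stay dependency-free, and an "oracle" mask computed from the known sources, which stands in for the mask a trained model would have to predict from the mix alone. All signal choices and names are assumptions for illustration.

```python
import cmath
import math

N = 64        # frame length in samples
EPS = 1e-12   # avoids division by zero in empty bins

def dft(x):
    """Naive discrete Fourier transform (fine for a 64-sample frame)."""
    n = len(x)
    return [sum(x[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n))
            for k in range(n)]

def idft(spec):
    """Inverse of dft(), returning the real part of each sample."""
    n = len(spec)
    return [sum(spec[k] * cmath.exp(2j * math.pi * k * t / n)
                for k in range(n)).real / n
            for t in range(n)]

def tone(bin_index, amp=1.0, phase=0.0):
    """A sine that falls exactly on one DFT bin."""
    return [amp * math.sin(2 * math.pi * bin_index * t / N + phase)
            for t in range(N)]

def rms_error(a, b):
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)) / len(a))

def separate(vocal, backing):
    """Apply an oracle ratio mask to the mix and return the vocal estimate."""
    mix = [v + b for v, b in zip(vocal, backing)]
    mix_spec, vocal_spec, backing_spec = dft(mix), dft(vocal), dft(backing)
    # Fraction of each bin's magnitude attributed to the vocal (0..1).
    mask = [abs(v) / (abs(v) + abs(b) + EPS)
            for v, b in zip(vocal_spec, backing_spec)]
    return idft([m * s for m, s in zip(mask, mix_spec)])

vocal = tone(8)                     # stand-in vocal
bass = tone(3, amp=0.7)             # occupies a different bin
pad = tone(8, amp=0.3, phase=1.0)   # shares the vocal's bin

# Backing that does not overlap the vocal in frequency.
error_no_overlap = rms_error(separate(vocal, bass), vocal)

# Backing that partly shares the vocal's frequency bin.
backing = [b + p for b, p in zip(bass, pad)]
error_overlap = rms_error(separate(vocal, backing), vocal)

print(f"error without overlap: {error_no_overlap:.2e}")
print(f"error with overlap:    {error_overlap:.3f}")
```

When the backing sits in different bins, the masked result matches the vocal almost exactly. When an instrument shares the vocal's bin, even a perfect-knowledge mask leaves a residual, which is the toy-scale counterpart of the bleed heard in real extractions.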

Phase cancellation removes what’s in the center. AI separation removes whatever doesn’t sound like the voice.

What Quality Is Actually Achievable With AI Vocal Extraction?

A stem splitter applied to a professionally produced track yields a vocal that’s usable in a professional context for most tracks. The remaining artifacts are typically faint bleed from instruments that occupy the same frequency range as the vocal, reverb from the original mix that was applied to the vocal and can’t always be fully separated, and occasional changes in the vocal’s tonal balance introduced by the separation process.

For DJ use, these artifact levels are workable in many contexts. The key distinction is between:

Contexts where artifact level matters less: DJ mixes where the extracted vocal is layered over new production, where the original instrumentation isn’t present to create a phase conflict, or where the vocal is used as a brief accent rather than a sustained presence.

Contexts where artifact level matters more: Direct A/B comparison with the original track, situations where the extracted vocal is played in isolation without masking from the new production, or club systems with very high-resolution playback that reveal artifacts that aren’t audible on smaller systems.

Understanding which context applies to your use case determines the preparation your extracted acapella needs before use.

How to Get the Best Vocal Extraction for DJ Use

Use the highest quality source file available. An AI stem splitter processing a high-quality lossless source will produce better separation than one processing a heavily compressed MP3. If the track is available as a FLAC or WAV download, use that for extraction rather than a streaming rip.

Process the extracted vocal stem before using it in a mix. After extraction, apply de-reverb processing to reduce the reverb tail from the original mix that may have bled into the vocal stem, use light EQ to correct any tonal imbalance introduced by the separation process, and add subtle saturation if the extraction has left the vocal sounding thin. These steps take a raw extracted stem to mix-ready quality.
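De-reverb and EQ are usually handled by dedicated plug-ins, but the saturation step is simple enough to sketch. The example below shows one common approach, tanh soft clipping blended with the dry signal. The function name and the `drive` and `mix` values are illustrative assumptions, not recommended settings.

```python
import math

def saturate(samples, drive=2.0, mix=0.3):
    """Blend a tanh soft-clipped copy of the signal with the dry signal.

    samples: floats in the range -1.0..1.0
    drive:   how hard the signal is pushed into the tanh curve (assumed value)
    mix:     wet/dry balance, 0.0 = dry only, 1.0 = saturated only (assumed value)
    """
    norm = math.tanh(drive)  # keeps a full-scale input at full scale
    out = []
    for x in samples:
        wet = math.tanh(drive * x) / norm
        out.append((1.0 - mix) * x + mix * wet)
    return out

# A thin-sounding stem stand-in: a quiet 5-cycle sine.
n = 200
stem = [0.5 * math.sin(2 * math.pi * 5 * t / n) for t in range(n)]
thickened = saturate(stem)

peak_before = max(abs(x) for x in stem)
peak_after = max(abs(x) for x in thickened)
print(f"peak before: {peak_before:.3f}, peak after: {peak_after:.3f}")
```

Because the tanh curve lifts mid-level samples more than peaks, the result gains density and added harmonics without exceeding full scale. Keeping `mix` low is what makes the effect subtle.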

Layer the extracted vocal over ambient content to mask artifacts. The most common and most forgiving use case for AI-extracted vocals is layering them over a new ambient bed — a subtle pad, a drone, the opening of the next track’s breakdown. This masking context makes artifact-level bleed inaudible in the mix.
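As a rough way to reason about the layering step, the sketch below sums a stem and an ambient bed at chosen gains and compares the level of the bed with the level of the leftover bleed. The signals, the gain values, and the helper names are hypothetical. A level difference is only a crude proxy for audibility, since real masking also depends on frequency content.

```python
import math

def db_to_gain(db):
    """Convert a level in decibels to a linear gain factor."""
    return 10 ** (db / 20)

def rms_db(samples):
    """RMS level in dB relative to full scale (1.0)."""
    rms = math.sqrt(sum(x * x for x in samples) / len(samples))
    return 20 * math.log10(rms) if rms > 0 else float("-inf")

def layer(stem, bed, stem_db=0.0, bed_db=-12.0):
    """Sum an extracted stem and an ambient bed at the given gains."""
    gs, gb = db_to_gain(stem_db), db_to_gain(bed_db)
    return [gs * s + gb * b for s, b in zip(stem, bed)]

n = 1000
# Hypothetical signals: a vocal, faint instrumental bleed left in the stem,
# and a sustained pad used as the ambient bed.
vocal = [0.6 * math.sin(2 * math.pi * 8 * t / n) for t in range(n)]
bleed = [0.03 * math.sin(2 * math.pi * 5 * t / n) for t in range(n)]
pad = [math.sin(2 * math.pi * 3 * t / n) for t in range(n)]

stem = [v + b for v, b in zip(vocal, bleed)]
mixed = layer(stem, pad, stem_db=0.0, bed_db=-12.0)

bed_level = rms_db([db_to_gain(-12.0) * p for p in pad])
bleed_level = rms_db(bleed)
margin_db = bed_level - bleed_level

print(f"bed sits {margin_db:.1f} dB above the bleed")
```

The larger the margin, the more likely the bed covers the bleed. If the margin shrinks toward zero, either raise the bed or clean the stem further before relying on masking.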

Test the extraction on your club system before the night. Artifacts that are inaudible on studio monitors may become audible on a large club system with substantial low-frequency output. Always test your extracted acapellas at volume before using them in a live context.

Frequently Asked Questions

Why has traditional phase cancellation produced poor acapella results?

Phase cancellation subtracts one channel of a stereo mix from the other to remove center-panned content including the vocal. On modern mastered tracks with width processing applied to the entire mix including the vocal, this produces a degraded, hollow-sounding result — it can cancel signal content that’s phase-coherent across both channels, but it can’t separate content that genuinely occupies the same frequency space as the instruments around it. AI separation takes a fundamentally different approach: pattern recognition that learns what vocal content looks like spectrally and behaviorally, separating it from everything else based on those learned patterns.

What artifact level should DJs expect from AI vocal extraction?

AI stem separation of a professionally produced track typically leaves faint bleed from instruments occupying the same frequency range as the vocal, reverb from the original mix that can’t always be fully separated, and occasional changes in the vocal’s tonal balance. These artifact levels are workable in many DJ contexts, especially when the extracted vocal is layered over new production where the original instrumentation isn’t present to create a phase conflict, or when it is used as a brief accent rather than a sustained presence. Artifacts matter more in direct A/B comparison with the original, when the vocal plays in isolation, or on club systems with high-resolution playback.

How should DJs prepare an AI-extracted vocal stem for use in a live set?

Use the highest quality source file available (lossless WAV or FLAC rather than a compressed MP3) since separation quality degrades with the source. After extraction, apply de-reverb processing to reduce the reverb tail from the original mix, light EQ to restore tonal balance, and subtle saturation if the vocal sounds thinned. Layer the extracted vocal over ambient content — a subtle pad, a drone, or the opening of the next track’s breakdown — to mask artifacts. Always test extracted acapellas at volume on your club system before the night, since artifacts inaudible on studio monitors may become audible at large-system scale.